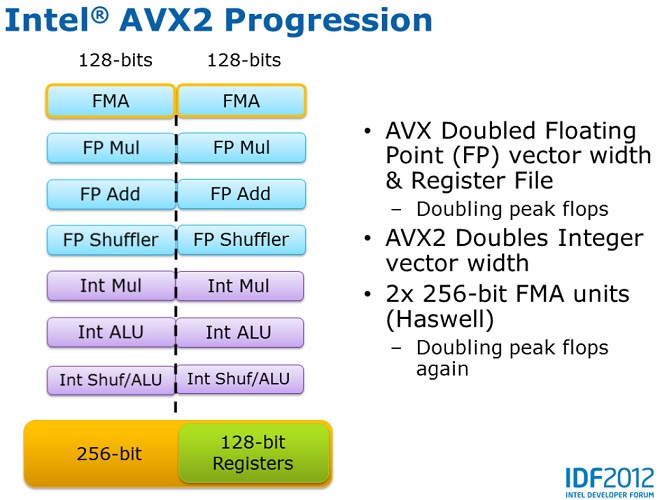

I'm trying to calculate CPU / GPU FLOPS performance but I'm not sure if I'm doing it correctly.

Let's say we have:

- A Kaby Lake CPU (clock: 2.8 GHz, cores: 4, threads: 8)
- A Pascal GPU (clock: 1.3 GHz, cores: 768)

This Wiki page says that Kaby Lake CPUs compute 32 FLOPS per cycle per core (single precision FP32) and Pascal cards compute 2 FLOPS per cycle per core (single precision FP32), which means we can compute their total FLOPS performance using the formula above:

- CPU: TOTAL_FLOPS = 2.8 GHz * 4 cores * 32 FLOPS = 358 GFLOPS
- GPU: TOTAL_FLOPS = 1.3 GHz * 768 cores * 2 FLOPS = 1996 GFLOPS

Most of the guides I've seen (like this one) use physical cores in the formula. What I don't understand is why we don't use threads (logical cores) instead. Weren't threads created specifically to double floating-point performance? Why are we ignoring them then?

Am I doing it correctly at all? I couldn't find a single reliable source for calculating FLOPS; the information on the internet is contradictory. For the i7 7700HQ Kaby Lake CPU I found FLOPS values as low as 29 GFLOPS, even though the formula above gives 358 GFLOPS.

Is there a cross-platform (Win, Mac, Linux) library in Node.js / Python / C++ that gets all the GPU stats like shading cores, clock, FP32 and FP64 FLOPS values so I could calculate the performance myself, or a library that automatically calculates the max theoretical FP32 and FP64 FLOPS performance by utilizing all available CPU / GPU instruction sets like AVX, SSE, etc.? The only library I could find is a 40-year-old C library, written when advanced instructions didn't even exist yet.

It's quite ridiculous that we cannot just get the FLOPS stats from the CPU / GPU directly; instead we have to download and parse a wiki page to get the value. Even when using C++, it seems (I don't actually know) we have to download the 2 GB CUDA toolkit just to get access to the Nvidia GPU information, which would make it practically impossible to make the app available to others, since no one would download a 2 GB app.
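The arithmetic behind the two TOTAL_FLOPS figures can be sketched in a few lines of Python. This is only an illustration of the formula as stated in the question: the function name `peak_gflops` is hypothetical, and the per-cycle values (32 for the CPU, 2 for the GPU) are simply the ones quoted from the wiki page, not values read from the hardware.

```python
def peak_gflops(clock_ghz: float, cores: int, flops_per_cycle: int) -> float:
    """Theoretical peak in GFLOPS: clock (GHz) * cores * FLOPs per cycle per core.

    Hypothetical helper for illustration; it only multiplies the three inputs.
    """
    return clock_ghz * cores * flops_per_cycle


# Values taken from the question (physical cores, FP32 figures from the wiki page).
cpu = peak_gflops(clock_ghz=2.8, cores=4, flops_per_cycle=32)
gpu = peak_gflops(clock_ghz=1.3, cores=768, flops_per_cycle=2)

print(f"CPU: {cpu:.1f} GFLOPS")
print(f"GPU: {gpu:.1f} GFLOPS")
```

The unrounded products are 358.4 and 1996.8 GFLOPS, which the question truncates to 358 and 1996. These are theoretical upper bounds, so a measured figure such as the 29 GFLOPS mentioned above is not directly comparable to them.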
